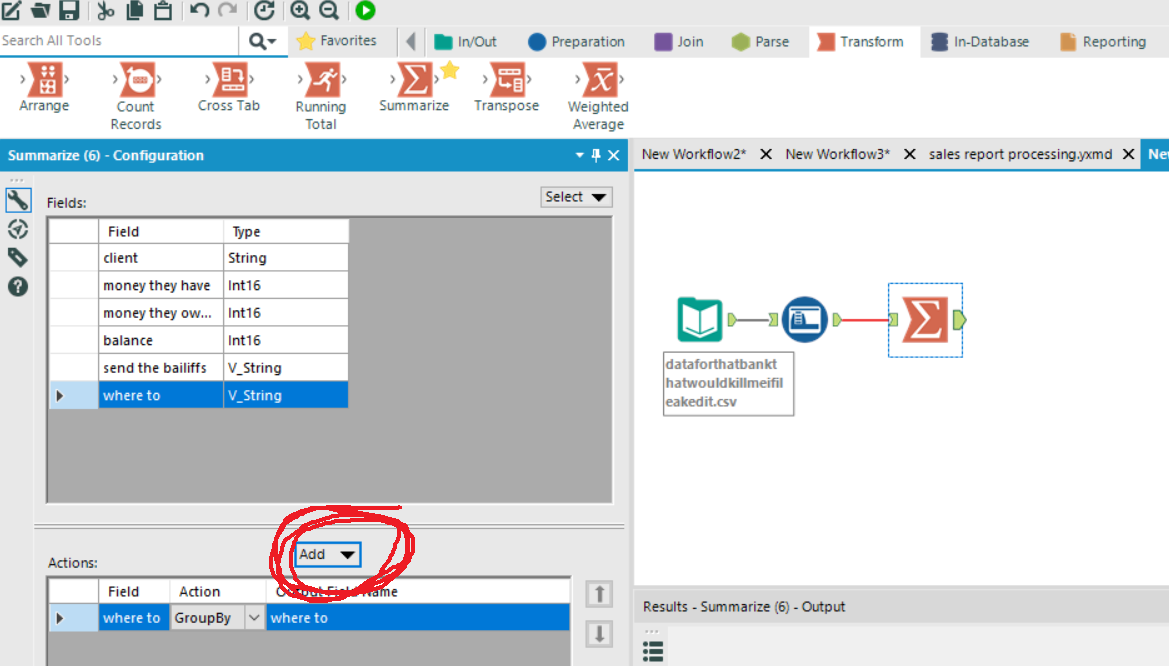

The Summarize tool can perform a variety of mathematical calculations, group a column of data by identical values, and return the minimum or maximum value in a column. The sum is calculated by adding all of the rows in the column.

First: Returns the first record in the group, based upon its record position.

Last: Returns the last record in the group, based upon its record position.

Count: Returns the count of records in the group. Null is different than a zero or an empty string.

Here is a workflow that might help you. If you replace the TEXT INPUT tool with your data, it will create two (2) versions of your summarized data (vertical and horizontal).

The way that I've constructed the workflow was to:

- Keep the 'Trigger' field as a key in 1 stream of data.
- Dynamically Select "DOUBLE" elements from the 2nd set of data (#3). Keep known (double) values + unknown (*) checked. The unknown checked will bring in new columns of data if present. You will probably want to allow all numeric data types.
- Join the data together (based upon record #). I did this to clearly show you that the dynamic select was keeping only the data types that you care about.
- Transpose the data into "Trigger" + "Name" + "Value" records.

If you run this module with 356 columns or 3,560 columns of data, it will provide you with the "Subtotal" of the day's values with a row for each "Trigger". If you add a non-numeric column (or a column that contains a non-numeric character), that column will be skipped. With the data that you provided in your screenshot, there shouldn't be any problems.

If your field name contains a 'SPACE', the results that the workflow returns will rename the field, with an underscore '_' replacing the space.

Please let me know how this works for you.
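The workflow described above can be approximated outside Alteryx for a quick sanity check. The following is a minimal Python sketch, not the workflow itself: the function name `subtotal_by_trigger`, the sample rows, and the column names `Day 1`, `Day 2`, and `Note` are all made up for illustration. It keeps only the numeric columns, turns each row into "Trigger" + "Name" + "Value" records, subtotals them per trigger, and replaces spaces in field names with underscores.

```python
from collections import defaultdict


def is_number(value):
    """True for int/float values (bool is deliberately excluded)."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def subtotal_by_trigger(rows, key="Trigger"):
    """Sum every numeric column per key value (hypothetical helper)."""
    # "Dynamic select" step: keep columns whose values are all numeric.
    columns = [c for c in rows[0] if c != key]
    numeric = [c for c in columns if all(is_number(r[c]) for r in rows)]

    # "Transpose" step: one (key, name, value) record per cell, then sum.
    totals = defaultdict(float)
    for r in rows:
        for c in numeric:
            name = c.replace(" ", "_")  # spaces become underscores
            totals[(r[key], name)] += r[c]
    return dict(totals)


# Made-up sample data; "Note" is non-numeric and is therefore skipped.
rows = [
    {"Trigger": "A", "Day 1": 1.5, "Day 2": 2.0, "Note": "x"},
    {"Trigger": "A", "Day 1": 0.5, "Day 2": 1.0, "Note": "y"},
    {"Trigger": "B", "Day 1": 4.0, "Day 2": 3.0, "Note": "z"},
]

# Vertical version: one entry per (Trigger, Name) pair.
vertical = subtotal_by_trigger(rows)

# Horizontal version: one entry per Trigger, with a value per field name.
horizontal = defaultdict(dict)
for (trigger, name), total in vertical.items():
    horizontal[trigger][name] = total
```

Because the numeric columns are discovered from the data rather than listed by hand, the same sketch works whether there are a handful of columns or thousands.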

You can use the Summarize tool with Group By on all the data and total for the. If you're able to post your workflow as well as some sample data, happy to take a look at where else efficiencies can be gained.

All of the resulting data from the records in a group are then summarized. Any non-blob or spatial object has this option. If no Group By field is specified, the entire file will be summarized.

The order of the Group By fields would matter if you are looking for a specific data order. If you are not specific about data order, it shouldn't matter. Here is an example of how order might change.

Sum: Returns the sum value for the group. The sum is calculated by adding all of the values of a group.

Count: Returns the count of records in the group.

Count Non Null: Identical to Count, except it only counts those records that are not null. Null means there is no value set for the record. This is different than a zero or an empty string.

Count Null: Identical to Count, except it only counts those records that are null.

Count Distinct: Returns the count of unique records in the group.

Count Distinct Non Null: Identical to Count Distinct, except it only counts those records that are not null.
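The difference between the count variants is easiest to see on a small group. This is a rough Python sketch of the definitions above, not Alteryx's implementation: `None` stands in for null, the function name `summarize` and the sample values are invented, and two behaviors are assumptions made for the example (nulls are left out of the sum, and a null counts as one distinct value in Count Distinct).

```python
def summarize(values):
    """Apply the summary actions to one non-empty group of values.

    None plays the role of null. Zero is a real value, not a null.
    """
    non_null = [v for v in values if v is not None]
    return {
        "Sum": sum(non_null),                        # assumption: nulls are ignored
        "Count": len(values),                        # every record in the group
        "CountNonNull": len(non_null),               # records with a value set
        "CountNull": len(values) - len(non_null),    # records with no value set
        "CountDistinct": len(set(values)),           # assumption: null is one distinct value
        "CountDistinctNonNull": len(set(non_null)),  # unique values, nulls excluded
        "First": values[0],                          # by record position
        "Last": values[-1],                          # by record position
    }


# One made-up group: a repeated 3, a null, a zero, and a 5.
result = summarize([3, None, 0, 3, 5])
```

For this group the sketch gives a Count of 5, a Count Non Null of 4, and a Count Null of 1. The zero is counted as a value, which is the distinction the definitions draw between null and zero.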

Summarize tool does not list all rows grouped. Currently, I'm trying to concatenate a few rows into one row using the Summarize tool. However, at a certain character, instead of listing the other rows, it replaces them with '.'. It seems like as soon as the Summarize tool gets to a certain character count, it cuts the.

Group By: Combines database records with identical values in a specified field into a single record.

You can cache your workflow by right-clicking at a place where you want to 'freeze' the data, so the Summarize tool only needs to run once. I suggest letting the process run on its own.
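To check whether a concatenated value has been cut short, it can help to build the full string independently and compare lengths. The sketch below is plain Python and makes no claim about how the Summarize tool behaves internally; the function name `concat_by_group`, the field names `Group` and `Item`, and the sample rows are hypothetical.

```python
from collections import defaultdict


def concat_by_group(rows, key, field, sep=","):
    """Join the values of `field` for each distinct `key`, in record order."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[key]].append(str(r[field]))
    return {k: sep.join(v) for k, v in groups.items()}


# Made-up sample data.
rows = [
    {"Group": "A", "Item": "x1"},
    {"Group": "A", "Item": "x2"},
    {"Group": "B", "Item": "y1"},
]

joined = concat_by_group(rows, key="Group", field="Item")

# Length of each full, untruncated string, for comparison with the tool's output.
lengths = {k: len(v) for k, v in joined.items()}
```

If the string produced by the workflow is shorter than the length computed here, the value was truncated somewhere along the way.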
